There is a moment in every side project where the feature stops being the hard part and the plumbing quietly takes over.

For me it came on a Tuesday night, staring at a diagram with three boxes on it: a database for app data, one service for embeddings, another for vector search, and a dotted line between them labeled sync layer (TBD). I had not written a single line of sync logic yet and I already knew it would be the thing that ruined my weekends.

I was building Tannin, an iOS app that lets you scan a wine label, get a card with everything worth knowing about that bottle, and then chat with an AI sommelier.

The scan part was straightforward. The chat part was the problem.

A good sommelier does not just recite tasting notes. A good sommelier knows that 2018 was exceptional in Piedmont, that Nebbiolo wants braised meat, that Chablis works because acid cuts the cream, and that a bottle becomes more interesting when you can place it inside region, producer, vintage, and pairing context all at once. That kind of knowledge does not come from one clever prompt. It comes from a retrieval system with enough real material behind it to keep the model honest.

So I needed two things from my database.

I needed it to be a normal application database: users, scans, subscriptions, message history, transaction records.

And I needed it to be a vector store: a little over 10,000 knowledge chunks, embedded and searchable at query time.

Conventional wisdom says those are two different tools. App data in one place, vectors in another, maybe a sync job in the middle, maybe some orchestration around it, maybe a second set of credentials and dashboards while you are at it.

I did not want that setup. I am one person.

I wanted the architecture to stay honest. I wanted the product work to be the hard part, not the glue code.

That constraint pushed me toward MongoDB Atlas. The longer I built Tannin on it, the less it felt like I had chosen a vendor and the more it felt like I had chosen a shape of system I actually wanted to keep living with.

That is the real story behind the title. I am joining MongoDB as the enterprise account representative for the Pacific Northwest because I spent the last several months building a real AI app on the platform and came away with a very specific conclusion: for this category of product, keeping operational data and retrieval as close together as possible is not a nice architectural preference. It is what kept the app shippable.

What Tannin Actually Needed

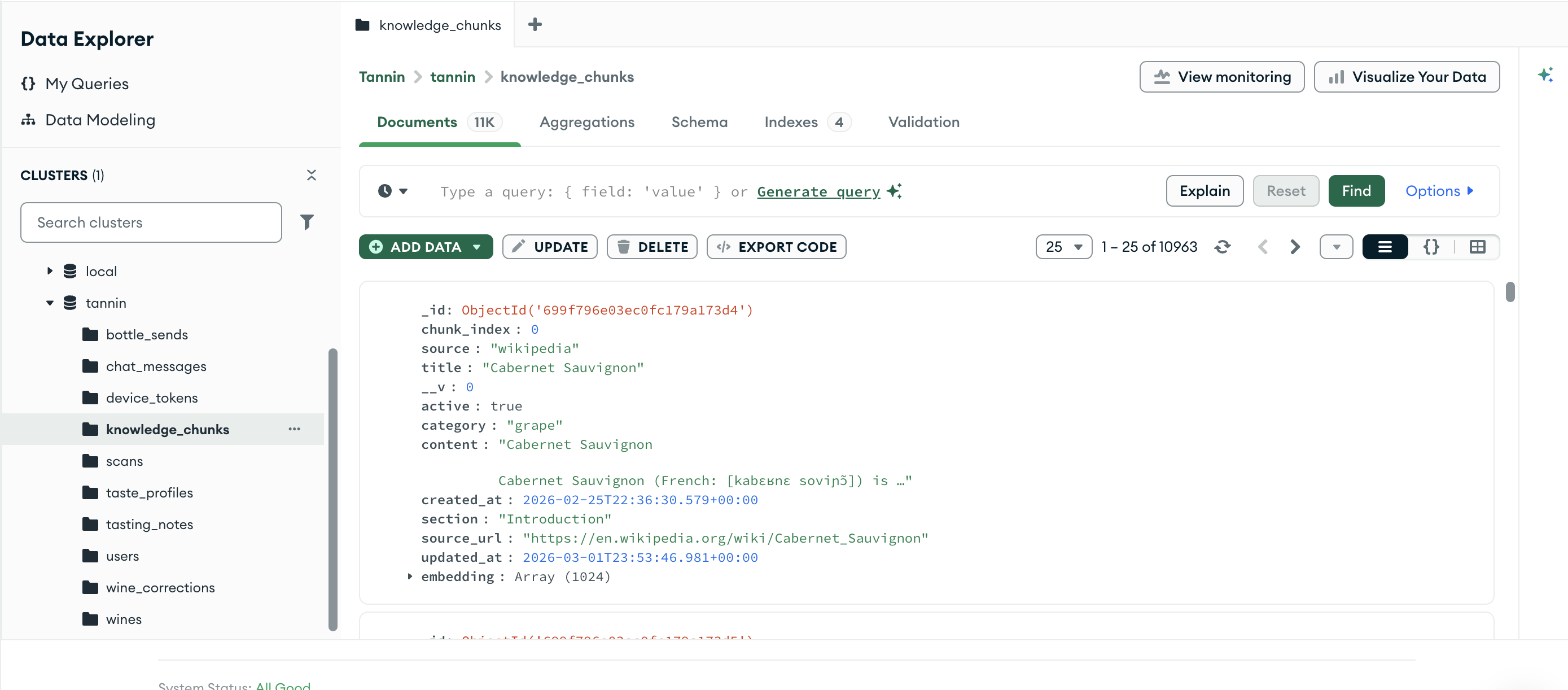

At the center of Tannin is a collection called knowledge_chunks.

Each document has a title, category, section label, content, source metadata, and an embedding vector. In practice that means grape explainers, region notes, winery material, food-pairing references, vintage-quality data, and all the little pieces of context that let the sommelier answer like something more grounded than a generic chatbot.

Today the live corpus sits at 10,949 chunks.

That number matters, but not for the usual startup-reason of trying to sound big (number go up). It matters because once you get to that size, the retrieval layer stops being a side feature and starts becoming part of the product itself. If the retrieval is weak, the app feels dumb. If the retrieval is strong, the app feels calm and trustworthy in a way people notice immediately.

The simplest version of the system is this:

- a user scans a bottle or asks a question

- the query gets embedded

- relevant chunks come back from vector search

- those chunks get injected into the sommelier's context

- the model answers in natural language

That description sounds clean because it leaves out the part where the real work lives.

The real work is in making retrieval good enough that the model stops sounding like a tour guide who only read the first paragraph of the brochure.

The Architecture Decision That Changed Everything

One thing became obvious quickly: I did not want a separate vector system living next to the operational app.

Not because that approach is impossible. It obviously works.

I did not want it because every additional system adds a tax that solo builders feel immediately:

- another bill

- another credential

- another set of API semantics

- another place where data can drift

- another component you need to trust at 11:30 p.m. when something looks off

Those costs are not theoretical when you are the only one holding the system in your head.

So I kept pushing toward the simpler shape:

- users in MongoDB

- scans in MongoDB

- messages in MongoDB

- subscriptions in MongoDB

- knowledge chunks in MongoDB

- vector search in MongoDB

That does not make the app magically easy. It just removes a class of problems that would otherwise compete with the product itself.

This is the part I think gets lost when people talk about AI architecture in the abstract. They talk as if the main question is model quality. Sometimes it is. More often, especially early on, the main question is whether the system is coherent enough that you can keep improving it without turning your nights into maintenance work.

MongoDB gave me that coherence.

What Retrieval Actually Looks Like

The retrieval path is not one global search over everything.

That turned out to matter a lot.

A naive implementation runs a single $vectorSearch over the whole corpus and takes the top results. In practice that produced lopsided answers because different kinds of context were competing in one pile. Educational explainers, pairing notes, region context, and the small amount of winery material all have different jobs to do in the answer.

So I changed the retrieval strategy.

Instead of one global search, I run parallel category-scoped searches across the same collection and the same index:

- educational content

- food-pairing material

- winery content

The query gets embedded once. Each scoped search runs with a category filter. The results get merged by score. The top handful go into the sommelier's context window.

That one change made the system feel far more intentional.

Now a user asking about malolactic fermentation can get actual conceptual context instead of a vaguely adjacent passage. A user asking what to eat with Riesling can get pairing logic instead of random tasting notes. The app feels less like "a model with search attached" and more like a real point of view on the question being asked.

None of this required a second retrieval system. It required using the database more deliberately.

That mattered to me. It still does.

The Part I Did Not Expect

The current 10,949 curated chunks are the foundation, not the ceiling.

Every scan goes into the app. Every message goes into the app. Every correction, odd query, and recurring misunderstanding leaves a trail.

That means the long-term opportunity is not just “search the original knowledge base better.” It is “let the product gradually learn from how people actually use it.”

The interesting architectural part is that this second layer of signal lives in the same system as the first.

The application data and the retrieval data are not strangers to each other.

That opens up a more interesting product path:

- the expert-curated reference layer

- the product/source layer

- the real user-interaction layer

all living close enough together that I can build against them as one product surface instead of treating them like three separate worlds.

That does not mean I am throwing raw user behavior straight into the prompt and calling it intelligence. It means the system can evolve without me inventing another data sync story every time I want the product to get sharper.

That is what I mean when I say the architecture made the app more buildable.

The Moments That Made It Real

A few moments stood out.

1. The migration that was barely a migration

I started on Railway's MongoDB Community Edition because it was cheap and I was prototyping.

When it came time to move to Atlas, I expected the kind of weekend project people like to mythologize. Instead, it was provision the cluster, run mongodump, run mongorestore, swap MONGODB_URI, and watch the app come back up.

The move itself was boring.

That is a compliment.

The Mongoose code did not change. The application did not go through an identity crisis. Once the app only knew the database through one connection string, the migration became what infrastructure changes should be when the system is designed sanely: a configuration change plus a new capability.

In this case, the new capability was Atlas Vector Search.

2. The moment the fallback stopped being needed

Before Atlas, I had written the RAG pipeline to fall back to $text search if $vectorSearch was unavailable.

That meant development worked on Community Edition, even if it was not the end state I wanted.

Then I created the Atlas vector index and waited for it to build.

A few minutes later the system quietly crossed a threshold. The fallback path stopped firing. The vector path just started working. No new deployment, no code rewrite, no feature branch. The infrastructure had caught up to the application.

That moment mattered because it showed me I had chosen a stack with an upgrade path instead of a stack that would force a rewrite the second the product got more serious.

3. The small database feature that saved future pain

One of my favorite details in Tannin is not remotely flashy.

StoreKit gives each paid subscription an original transaction ID that should be unique per user. Free-tier users have null.

That is the kind of thing that can become annoying quickly if your constraint system is clumsy.

The fix in MongoDB was a partial unique index: only enforce uniqueness when the field is a string.

That is not a headline feature. It is just one of those small pieces of leverage that keeps the app from collecting dumb edge-case debt.

And this is part of why I trust the platform more now than I did when I started. The big features got me in the door. The small boring features are what kept the system feeling livable.

What Convinced Me

I did not come away from this thinking MongoDB is magic.

You still have to make real decisions:

- how to chunk content

- how to evaluate retrieval quality

- how to organize categories

- how to think about freshness

- how to keep the model from sounding confident and wrong

None of that goes away because the platform is good.

What changed for me was narrower and more useful than that.

I came away thinking MongoDB is a very sane place to build applications that combine:

- normal operational data

- search and retrieval

- iterative product development

without forcing the builder to keep stitching the system back together.

That is a very different claim than “this tool does everything.”

It is a better claim too, because it is the one I can say honestly from experience.

I know where the platform made my life easier. I know where I still had to think. I know which questions matter because I had to answer them with my own app on the line.

That is what made this next step feel natural.

Why This Made The Next Step Feel Natural

The reason this move feels straightforward is that I did not come to it from a slide deck. I came to it by building a real AI product, watching where the architecture got simpler, and paying attention to which tradeoffs were actually worth making.

Enterprise teams evaluating MongoDB for AI workloads are asking the same questions I asked:

- do I really need a separate vector database?

- how painful is it to move from prototype to managed infrastructure?

- what breaks when retrieval becomes part of the app?

- how much complexity am I signing up for if I want one product to do more than one kind of work?

I have lived those questions from the builder side. That is the only credential I care about bringing into the role.

What appeals to me about joining MongoDB is simple: I get to spend the next chapter helping other teams through the same architectural decisions that mattered in Tannin. Not in the abstract. Not from a generic AI deck. From the perspective of someone who actually had to decide what to store, what to index, what to retrieve, what to migrate, and what kinds of complexity were worth carrying.

I built Tannin on MongoDB. Now I get to help other teams do the same. If you are building in the Pacific Northwest and want to talk, I would love to.