The Problem

For years, technical people had a pejorative answer for anyone with a business idea: you just need a technical cofounder.

As if the whole problem were a missing species of person.

What they usually meant was something more practical. Someone had to remember the architecture, watch the deploys, catch regressions, keep the repos moving, and turn vague ambition into shipped work.

Well, Happy learned how to putt.

I have four live projects. A wine discovery iOS app on MongoDB Atlas and Railway. An account research platform on Neon Postgres and Railway. A return management tool on Vercel. A sales intelligence system on MongoDB Atlas and Cloudflare Pages. Each one has its own database, deployment surface, repo, and quiet little way of accumulating uncertainty.

I am the only builder.

The hidden tax was not fixing things. It was reconstructing context. The workflow before Aldo looked like this: SSH into something, check the logs, open GitHub, scan for PRs, open Railway, check the deploy, open Vercel, check the other deploy, open Atlas, check the metrics. By the time I had the full picture across all four projects, twenty minutes had passed and I had forgotten why I opened the terminal in the first place.

This is the part people miss when they talk about AI builders. The useful thing is not raw intelligence. It is continuity. For me, the useful system looks less like a cofounder and more like an SRE for one builder: something that remembers what changed, what matters, and what deserves your attention right now.

I wanted one place to see everything. One interface to ask what happened today and get an answer. And eventually, one agent that could not just report on my projects but help close small remediation loops for me.

So I built one on a $250 box.

What Aldo Actually Is

Aldo the Apache Server started as a Telegram bot running on a $250 ACEMAGIC Mini PC sitting on my desk at home. Ryzen 5, 16GB RAM, 1TB SSD, Ubuntu 24.04. No cloud bill. No serverless cold starts. A physical machine on my desk that I could text from my phone.

But the interesting part was never Telegram or the mini PC.

The interesting part was that Aldo sat in the continuity layer. It watched the repos, deployments, and databases long enough to notice the difference between noise and signal. It kept state alive long enough for me to stop rebuilding the same context from scratch every day.

That is why I think of Aldo less as a bot and more as a local-first agent system for one builder. Telegram was just the first client that made the loop real.

At the time, Aldo was watching a small portfolio of live projects:

| Project | Stack | Database | Deploys To |
|---|---|---|---|
| Tannin | Node/Express + SwiftUI | MongoDB Atlas | Railway |
| Auggie | FastAPI/Python | Neon Postgres | Railway |
| Remi | Next.js 15 | MongoDB Atlas | Vercel |
| ACE | Node/Express | MongoDB Atlas | Cloudflare Pages + Railway |

Four projects. Two database providers. Three deployment platforms. One agent sitting in the middle, trying to keep the whole thing legible.

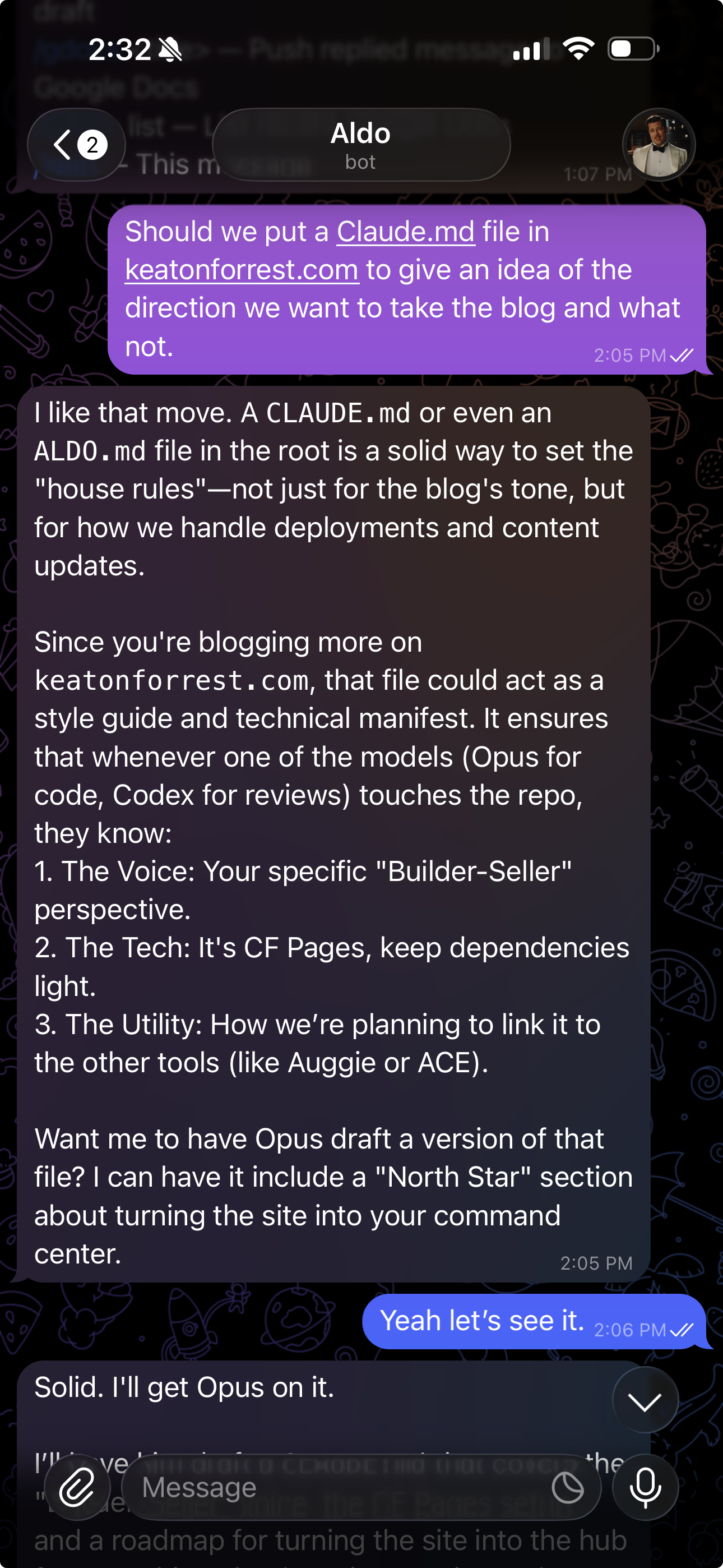

The original interaction model was simple. I texted Aldo from my phone. Aldo looked at the right systems, thought about what it found, and texted me back. No dashboards. No browser tabs. Just a conversation with the thing that had already done the remembering for me.

This was the first version that made the whole thing feel real:

That alone was useful.

It was not enough.

Why the Box Still Matters

The honest answer is that I did this because it looked fun.

I saw the OpenClaw craze, had a little "we have a Mac Mini at home" moment, and wanted my own cheap box doing useful work. Part of the appeal was practical. Part of it was just that a dedicated machine makes the whole idea feel more real.

Three things made it stick.

Fun. A cheap mini PC turns the idea from "some scripts running somewhere" into a physical system you can plug in, watch boot, and text from your phone. That matters more than people admit.

Local state. Some parts of Aldo want microsecond reads from a local database, not another network hop. Fast local state changes what kinds of interactions feel lightweight.

Cost. A $250 one-time purchase versus another recurring monthly bill. For a personal agent that runs all day, the math is straightforward.

The tradeoff is uptime. If the power goes out, Aldo goes dark. For a personal system, that is acceptable. For a customer-facing product, it would not be.

The SRE Part Is the Review Loop

The crucial reframing is this:

the SRE is not the chat window.

The SRE is the review loop behind it.

Every two hours, Aldo polls each project's default branch for new human commits that have not been reviewed yet. When it finds them, it pulls the diff and runs a structured review that scores the change across five dimensions (correctness, security, error handling, edge cases, and conventions). It then sends the results to Telegram. If there are bugs or warnings, it reads the full source files involved, generates a minimal unified diff patch, and sends that too.
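Here is a minimal sketch of the detect step. It assumes the default branch's commit list has already been fetched, for example from GitHub's REST endpoint for listing commits. It also assumes the SHAs of previously reviewed commits are available as a set. `Commit`, `IGNORED_AUTHORS`, and `find_unreviewed` are hypothetical names for illustration, not Aldo's code. The only real convention it relies on is that GitHub App accounts have logins ending in `[bot]`.

```python
from dataclasses import dataclass

# Hypothetical: automation accounts whose commits should never trigger a
# review (for example, Aldo's own account). Not taken from Aldo's code.
IGNORED_AUTHORS = {"aldo-bot"}


@dataclass(frozen=True)
class Commit:
    sha: str
    author_login: str
    message: str


def is_human(commit: Commit) -> bool:
    """Heuristic: GitHub App accounts have logins ending in "[bot]"."""
    if commit.author_login.endswith("[bot]"):
        return False
    return commit.author_login not in IGNORED_AUTHORS


def find_unreviewed(commits: list[Commit], reviewed_shas: set[str]) -> list[Commit]:
    """Human commits with no recorded review, in the order they were listed."""
    return [c for c in commits if is_human(c) and c.sha not in reviewed_shas]


if __name__ == "__main__":
    commits = [
        Commit("a1", "me", "fix pagination"),
        Commit("b2", "dependabot[bot]", "bump express"),
        Commit("c3", "me", "add wine search"),
    ]
    print([c.sha for c in find_unreviewed(commits, reviewed_shas={"a1"})])  # ['c3']
```

Keeping "reviewed" as a set of SHAs makes the two-hour poll idempotent. A commit that was already reviewed is skipped no matter how many times it shows up in the listing.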

This is not a linter. The review is contextual. It knows which project it is looking at, what changed, and why that change might be risky given the rest of the codebase. The remediation is not a suggestion. It is a concrete patch I can apply.

The loop is: detect -> review -> remediate -> notify.
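The review, remediate, and notify steps can be sketched as plain function composition. This sketch assumes the review model returns a score for each of the five dimensions plus a list of findings tagged with a severity. `Review`, `Finding`, the severity labels, and the 1 to 5 scale are all assumptions for illustration. The model calls and the Telegram sender are passed in as functions, so the loop can be exercised without either.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

DIMENSIONS = ("correctness", "security", "error_handling", "edge_cases", "conventions")


@dataclass
class Finding:
    severity: str  # assumed labels: "bug", "warning", "nit"
    file: str
    note: str


@dataclass
class Review:
    scores: dict[str, int]  # one score per dimension; a 1-5 scale is assumed
    findings: list[Finding] = field(default_factory=list)

    def needs_remediation(self) -> bool:
        # Only bugs or warnings trigger a patch, as described in the text.
        return any(f.severity in ("bug", "warning") for f in self.findings)


def run_review_loop(
    diff: str,
    review: Callable[[str], Review],
    remediate: Callable[[str, Review], str],
    notify: Callable[[str], None],
) -> Optional[str]:
    """review -> remediate -> notify for one diff the detect step found."""
    result = review(diff)
    missing = set(DIMENSIONS) - set(result.scores)
    if missing:
        raise ValueError(f"review is missing dimensions: {sorted(missing)}")
    notify("Review: " + ", ".join(f"{d}={result.scores[d]}" for d in DIMENSIONS))
    if not result.needs_remediation():
        return None
    patch = remediate(diff, result)
    notify("Proposed patch:\n" + patch)
    return patch


if __name__ == "__main__":
    sent: list[str] = []

    def fake_review(diff: str) -> Review:
        return Review({d: 4 for d in DIMENSIONS},
                      [Finding("bug", "routes/search.js", "unhandled null cursor")])

    def fake_remediate(diff: str, r: Review) -> str:
        return "--- a/routes/search.js\n+++ b/routes/search.js\n"

    run_review_loop("<diff text>", fake_review, fake_remediate, sent.append)
    print(len(sent))  # 2: the score summary and the patch
```

Validating that all five dimensions came back is a cheap guard against a model silently dropping part of the structured output.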

The next step is closing the loop. Instead of texting me the patch, Aldo would hand it to Claude Code, which would apply it and open a PR.

Why Model Routing Matters More Than Frameworks

Early versions of Aldo used LangGraph for a multi-step coding pipeline: PRD decomposition, checkpointing, approval gates. I ripped all of that out. It was infrastructure for a workflow I was not actually using.

What survived is simpler and more useful: model routing. Different models are good at different things, and the review loop leans on that. Gemini Pro runs the structured review because it is analytical and cheap at scale. Codex generates remediation patches because it writes better code. Gemini Flash handles chat and triage because it is fast.

The routing layer in llm_service.py is the only "framework" Aldo needs. It picks the right model for each task, tries the direct API first, and falls back to OpenRouter if something fails. No graphs, no checkpointing, no state machines. Just the right model for the right job.
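Here is a minimal sketch of routing with a fallback. It is not the actual contents of llm_service.py. The model strings are placeholders rather than real provider model IDs. `direct` and `fallback` stand in for whatever SDK clients call the provider API and OpenRouter, and `complete` and `AllProvidersFailed` are hypothetical names.

```python
from typing import Callable

# Task -> model, following the routing described above. These strings are
# placeholders, not real provider model IDs.
ROUTES = {
    "review": "gemini-pro",
    "remediation": "codex",
    "chat": "gemini-flash",
    "triage": "gemini-flash",
}

# (model, prompt) -> completion text. Stand-in for a real SDK client.
Provider = Callable[[str, str], str]


class AllProvidersFailed(RuntimeError):
    pass


def complete(task: str, prompt: str, direct: Provider, fallback: Provider) -> str:
    """Route the task to a model, try the direct API, then the fallback."""
    model = ROUTES.get(task, ROUTES["chat"])  # unknown tasks use the fast model
    errors = []
    for name, provider in (("direct", direct), ("fallback", fallback)):
        try:
            return provider(model, prompt)
        except Exception as exc:  # a real client would catch narrower errors
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


if __name__ == "__main__":
    def flaky_direct(model: str, prompt: str) -> str:
        raise TimeoutError("direct API timed out")

    def openrouter_stub(model: str, prompt: str) -> str:
        return f"[{model} via fallback] ok"

    print(complete("review", "Review this diff", flaky_direct, openrouter_stub))
    # [gemini-pro via fallback] ok
```

The whole "framework" is a dictionary plus a loop over providers. That is the point: swapping a model means changing one string, not one graph.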

The Review Loop Is More Interesting Than the Interface

The tendency is to focus on the visible parts: the Telegram bot, the notifications, the status command. But the real center of gravity is the review loop running in the background.

Aldo's scheduler runs eight jobs, including health checks every fifteen minutes, CI failure checks every hour, default-branch reviews every two hours, unpushed commit alerts, daily status, and a weekly summary. Each job is a small function that checks one thing and notifies me if something needs attention.
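The "small function that checks one thing" pattern maps onto a tiny interval scheduler. This stdlib-only sketch includes only the jobs whose intervals the text states or implies, with the daily and weekly intervals taken from the job names. Unpushed-commit alerts are left out because no interval is given. The job names and lambdas are hypothetical stand-ins, and a real deployment might use a library such as APScheduler instead.

```python
from dataclasses import dataclass
from typing import Callable, Optional

MINUTE = 60
HOUR = 60 * MINUTE
DAY = 24 * HOUR


@dataclass
class Job:
    name: str
    interval_s: int
    run: Callable[[], Optional[str]]  # returns a message only if attention is needed
    last_run: float = float("-inf")  # never run yet, so due on the first tick


def tick(jobs: list[Job], now: float, notify: Callable[[str], None]) -> list[str]:
    """Run each job whose interval has elapsed; notify only on non-empty results."""
    ran = []
    for job in jobs:
        if now - job.last_run >= job.interval_s:
            job.last_run = now
            ran.append(job.name)
            message = job.run()
            if message:
                notify(f"[{job.name}] {message}")
    return ran


def default_jobs() -> list[Job]:
    # Intervals from the text; the lambdas are hypothetical stand-ins.
    return [
        Job("health", 15 * MINUTE, lambda: None),
        Job("ci_failures", HOUR, lambda: None),
        Job("default_branch_review", 2 * HOUR, lambda: "1 new commit to review"),
        Job("daily_status", DAY, lambda: None),
        Job("weekly_summary", 7 * DAY, lambda: None),
    ]
```

In a long-running process you would call `tick(jobs, time.monotonic(), send)` every few seconds. A job that returns `None` stays silent, which keeps healthy runs out of the chat.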

The review job is the most interesting. It is not just diffing. It is reading the files, scoring the changes, generating patches, and delivering them to my phone. Improving the review prompt or sharpening the remediation model improves every project at once. The interface is just the delivery mechanism.

Why Telegram Was the Right First Client

Telegram was the correct first interface because it optimized for speed to usefulness.

I did not need to design a frontend. I did not need to think about hosting. I did not need browser state, auth flows, or polished UI components. I just needed a place to send commands and receive results.

The terminal UI ambition, a secondary workspace for diffs, traces, and task queues, turned out to be overbuilt. I was not using it. The things I actually do from a terminal, I do with Claude Code over SSH. Aldo's job is to watch and notify, and Telegram is the right interface for that. Short messages, inline buttons, available on my phone. When Aldo finds an issue on main and sends me a remediation patch, I can read it on the train.
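A remediation patch can be longer than a single Telegram message, because the Bot API's `sendMessage` accepts at most 4096 characters of text. This minimal sketch splits a patch on line boundaries and attaches inline buttons to the last chunk. The button labels and the `callback_data` format are hypothetical. The sketch only builds the JSON bodies. POSTing them to the Bot API's `sendMessage` method is left out.

```python
TELEGRAM_TEXT_LIMIT = 4096  # sendMessage text limit in the Bot API


def chunk_message(text: str, limit: int = TELEGRAM_TEXT_LIMIT) -> list[str]:
    """Split text on line boundaries so each chunk fits in one message.

    Lengths are Python characters, which approximates Telegram's count.
    A single line longer than the limit is hard-split."""
    chunks: list[str] = []
    current = ""
    for line in text.splitlines(keepends=True):
        while len(line) > limit:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(line[:limit])
            line = line[limit:]
        if len(current) + len(line) > limit:
            chunks.append(current)
            current = ""
        current += line
    if current:
        chunks.append(current)
    return chunks


def patch_payloads(chat_id: int, patch: str, review_id: str) -> list[dict]:
    """Build sendMessage JSON bodies; buttons go on the last chunk only."""
    chunks = chunk_message(patch) or ["(empty patch)"]
    payloads: list[dict] = [{"chat_id": chat_id, "text": c} for c in chunks]
    # Hypothetical callback_data scheme; Telegram caps callback_data at 64 bytes.
    payloads[-1]["reply_markup"] = {
        "inline_keyboard": [[
            {"text": "Ack", "callback_data": f"ack:{review_id}"},
            {"text": "Dismiss", "callback_data": f"dismiss:{review_id}"},
        ]]
    }
    return payloads
```

Splitting on line boundaries keeps each hunk line of a unified diff intact. That matters when reading a patch on a phone.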

The architecture is simpler now: Telegram as the single client, Claude Code as the interactive workspace when I need to steer. Aldo watches. Claude Code builds.

The Database Layer Is Simpler Than I Thought

Not all memory needs to be fancy. Aldo runs on SQLite in WAL mode: command logs, review history, health status, pipeline state. MongoDB Atlas stores the long-term audit trail and syncs down on boot. That is it.

The semantic memory layer I built (LanceDB, vector embeddings, reindexing jobs) got stripped. I was not querying it. The useful memory turned out to be structured: which commits have been reviewed, what the last health check returned, which branches have open PRs. Tables, not vectors.
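Here is a sketch of what "tables, not vectors" can look like using Python's built-in `sqlite3` module. The table and column names are assumptions, not Aldo's schema. WAL mode needs an on-disk database: an in-memory database reports `memory` instead of `wal`, so the sketch checks the mode it actually got.

```python
import os
import sqlite3
import tempfile

# Assumed schema for illustration; not Aldo's actual tables.
SCHEMA = """
CREATE TABLE IF NOT EXISTS reviewed_commits (
    project     TEXT NOT NULL,
    sha         TEXT NOT NULL,
    reviewed_at TEXT NOT NULL DEFAULT (datetime('now')),
    PRIMARY KEY (project, sha)
);
CREATE TABLE IF NOT EXISTS health_status (
    target     TEXT PRIMARY KEY,
    ok         INTEGER NOT NULL,
    detail     TEXT,
    checked_at TEXT NOT NULL DEFAULT (datetime('now'))
);
"""


def open_state(path: str) -> sqlite3.Connection:
    """Open (or create) the state database in WAL mode."""
    conn = sqlite3.connect(path)
    # WAL lets readers such as a /status query run while a job is writing.
    mode = conn.execute("PRAGMA journal_mode=WAL").fetchone()[0]
    if mode.lower() != "wal":
        raise RuntimeError(f"WAL not enabled (got {mode!r}); use an on-disk path")
    conn.executescript(SCHEMA)
    return conn


def mark_reviewed(conn: sqlite3.Connection, project: str, sha: str) -> None:
    with conn:  # commits the transaction on success
        conn.execute(
            "INSERT OR IGNORE INTO reviewed_commits (project, sha) VALUES (?, ?)",
            (project, sha),
        )


def reviewed_shas(conn: sqlite3.Connection, project: str) -> set[str]:
    rows = conn.execute(
        "SELECT sha FROM reviewed_commits WHERE project = ?", (project,)
    )
    return {sha for (sha,) in rows}


def record_health(conn: sqlite3.Connection, target: str, ok: bool, detail: str = "") -> None:
    with conn:
        conn.execute(
            "INSERT OR REPLACE INTO health_status (target, ok, detail) VALUES (?, ?, ?)",
            (target, int(ok), detail),
        )


if __name__ == "__main__":
    path = os.path.join(tempfile.mkdtemp(), "aldo-state.db")
    conn = open_state(path)
    mark_reviewed(conn, "remi", "abc123")
    mark_reviewed(conn, "remi", "abc123")  # duplicate is ignored
    print(reviewed_shas(conn, "remi"))  # {'abc123'}
```

The composite primary key on `(project, sha)` makes "has this commit been reviewed?" a single indexed lookup. That is the kind of question the review loop actually asks.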

What I Actually Learned

Continuity beats raw intelligence

A mediocre system with perfect context is more useful than a brilliant system that forgets everything between turns.

That is true for chat. It is true for coding. It is true for work in general.

The useful thing is not one giant prompt

I do not think the future here is "ask for the whole company in one shot."

The useful pattern is smaller asks, clearer instructions, real state, and visible review points.

That is as true for Aldo as it is for Claude Code or Codex.

A local machine changes the economics

Once you have a dedicated box at home, a lot of ideas get cheaper to try. Long-running agents, local state, self-hosted tools, model routing, dashboards, background jobs. The hardware turns recurring hesitation into one up-front decision.

The interface is not the product

Telegram got Aldo off the ground. Claude Code over SSH is where I steer.

But neither one is the real thing.

The real thing is the review loop behind them.

If This Is What an SRE Means

I do not think a bot replaces a real partner.

That is not the claim.

The claim is smaller and more interesting.

For years, "get a technical cofounder" was often shorthand for something much blunter: learn how to build this shit yourself.

That sentence is a little less true than it used to be.

What I can build now is something that watches the repos, remembers the deploys, reviews new commits, generates remediations, and hands me back something reviewable.

Not a mythical 10x engineer.

Something more specific than that.

A system that keeps the context alive long enough for me to move faster without dissolving into chaos.

How I Actually Use Aldo

Aldo is a watchdog. He monitors my projects so I do not have to.

Every new human commit on a project's default branch gets picked up by the review loop. Aldo pulls the diff, runs it through a structured review of correctness, security, error handling, edge cases, and conventions, and sends me the results on Telegram. If he finds bugs or warnings, he generates a remediation: a concrete patch I can apply.

Health checks run every fifteen minutes across Railway, Vercel, and GitHub. CI failures, deploy status, endpoint health. If something breaks, I hear about it. /status gives me the full picture in one message when I want to check manually.
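One common way to stop a fifteen-minute health check from turning into noise is to alert only on state transitions. The post does not say Aldo works exactly this way, so treat this as an assumption. The probes here are stubs standing in for real HTTP checks against Railway, Vercel, or GitHub. The target names, `run_health_checks`, and `format_status` are illustrative.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Check:
    target: str  # illustrative names such as "remi/vercel"
    probe: Callable[[], tuple[bool, str]]  # returns (ok, detail)


def run_health_checks(
    checks: list[Check],
    previous: dict[str, bool],
    notify: Callable[[str], None],
) -> dict[str, bool]:
    """Probe every target and alert only when a status changes.

    A target with no previous status alerts only if it is already failing.
    Returns the new status map, to be persisted for the next run."""
    current: dict[str, bool] = {}
    for check in checks:
        try:
            ok, detail = check.probe()
        except Exception as exc:  # a crashing probe counts as a failure
            ok, detail = False, f"probe error: {exc}"
        current[check.target] = ok
        before = previous.get(check.target)
        if (before is None and not ok) or (before is not None and before != ok):
            notify(f"{'RECOVERED' if ok else 'DOWN'} {check.target}: {detail}")
    return current


def format_status(statuses: dict[str, bool]) -> str:
    """A one-message summary in the spirit of /status."""
    lines = [f"{'OK  ' if ok else 'FAIL'} {t}" for t, ok in sorted(statuses.items())]
    return "\n".join(lines) or "no checks configured"
```

A target that stays down produces one alert, not one every fifteen minutes. A recovery produces one more, so the chat reflects changes rather than repeating the same state.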

The mental model is simple: Claude Code is the steering wheel. Aldo is the dashboard warning lights.

I SSH in with Claude Code when I need to think through something, debug, or build. Aldo the Apache Server watches what I ship and tells me when something is wrong. The next step is closing the loop: instead of texting me a patch, he would hand it to Claude Code to apply the fix automatically.